AI-Driven UX Design for Financial Services

Designing Predictive Tools for a Confident Retirement Planning and Servicing Platform:

Integrating AI into Client's Digital Experience

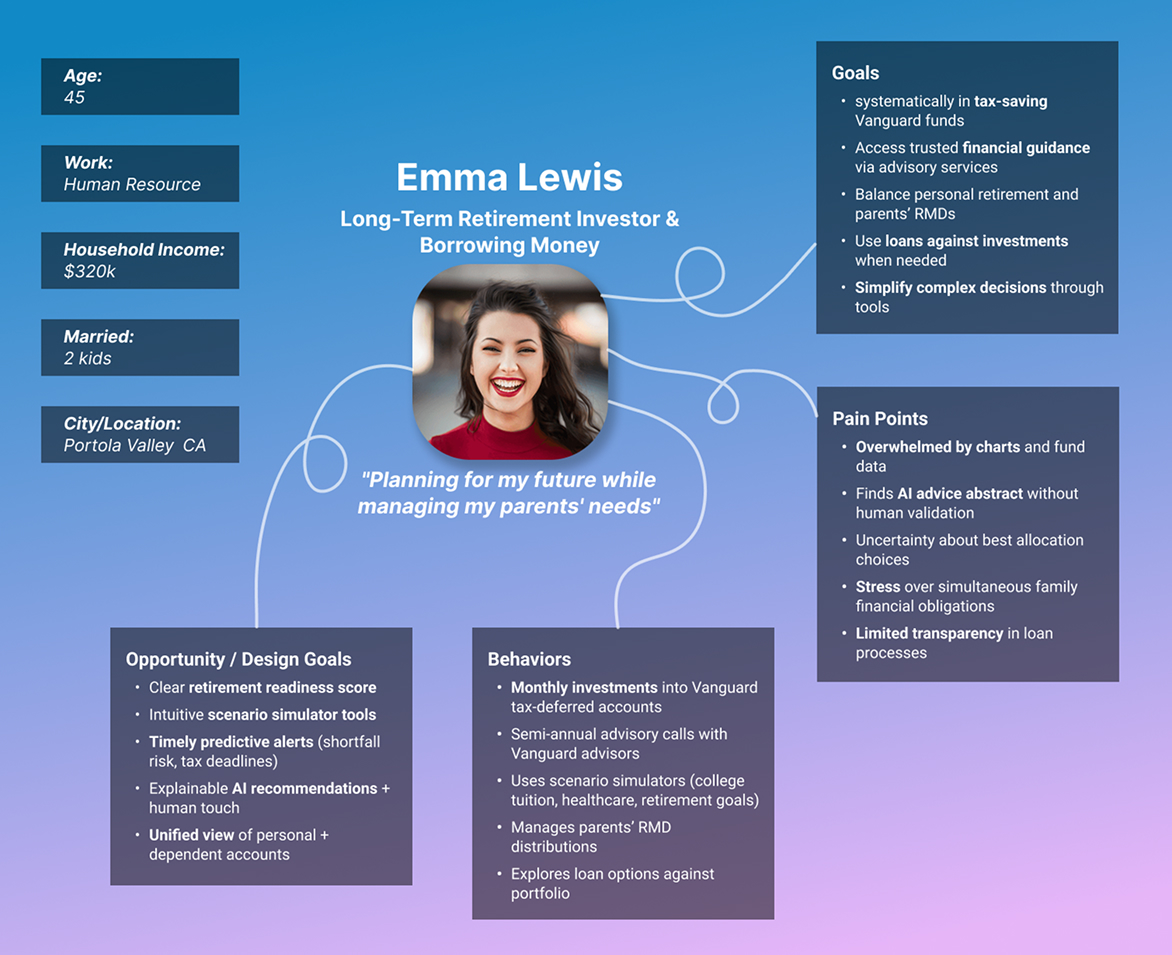

Setting the Scene

Adam Bronson

“I feel like I’m guessing about my future. Every time I log in, I’m worried I’m behind.”

Amanda Blake

“I wish I had a clear view of my retirement contributions per account to know how much more I can invest to maximize tax savings.”

Nate Polia

“When I borrow from my retirement account, I can’t see how it impacts my investments, penalties, or payoff details in relation to my on-going retirement savings.”

This was the heart of the problem. People had the discipline to save and to repay, but not the confidence to know whether they were okay.

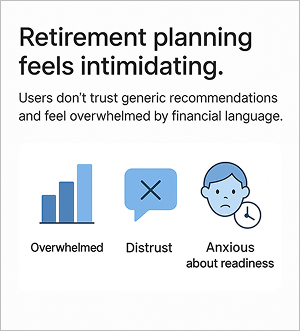

The Challenge

We set out to answer:

How might we help investors see where they stand without overwhelming them?

Could we use AI responsibly to give them clarity, not just more data?

How could we ensure every recommendation complied with SEC and FINRA regulations, balancing innovation with strict advisory rules?

I knew this meant more than adding new features; it meant designing transparent, predictive tools that felt trustworthy and empowering.

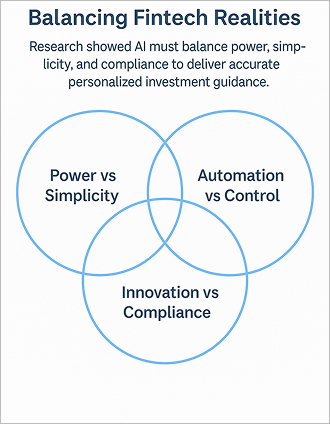

Navigating the Unknown

This was my third time designing AI-powered products for self-servicing and forecasting in a regulated financial-services context.

Early on, I faced three unique challenges:

1. Power vs. Simplicity

AI could project thousands of scenarios, but most users just wanted an answer to “Will I be okay?”

2. Automation vs. Control

People liked guidance but still wanted to feel in charge.

3. Innovation vs. Compliance

Any projections or recommendations needed to meet strict regulatory standards. We couldn’t use language that implied personalized fiduciary advice, and every disclaimer had to be legally approved.

I kept coming back to one principle:

If users don’t trust or understand the AI-driven recommendations, it doesn’t matter how smart the AI is.

What We Tried

To tackle these challenges, I led a process built around:

1. Deep listening

2. Iterative design

3. Tight collaboration with Compliance, Engineering, PMs, and the client advisory team

Deep Listening

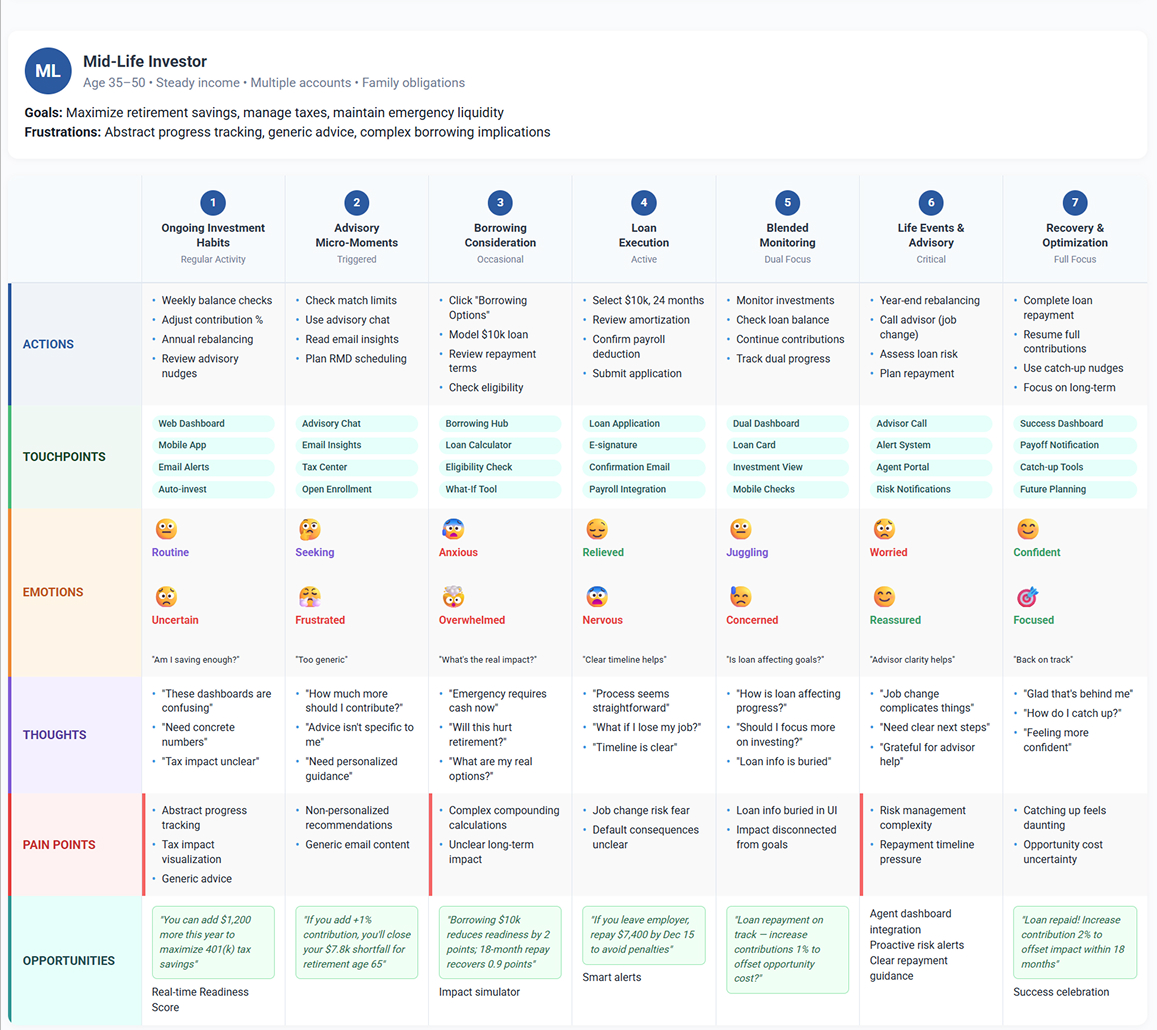

We began by mapping the journey of investors:

Checking balances on a mobile app in the evenings.

Feeling anxiety seeing static charts they didn’t fully grasp.

Ignoring generic recommendations because they seemed arbitrary.

One moment stuck with me: A user looked at the projections and said,

Dylan Matthews

“I don’t know what any of this means for me personally.”

Insights like these shaped everything that followed.

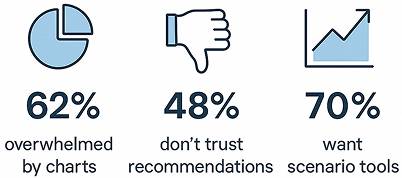

Research Highlights

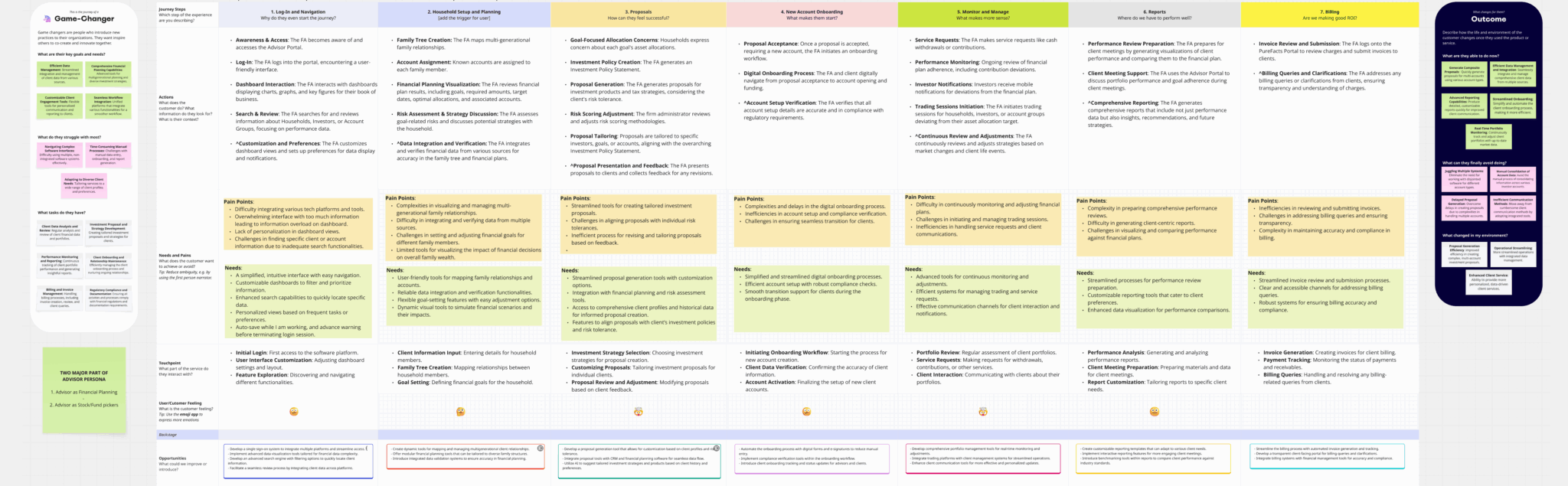

Discovery and Mixed Methods Research

To understand how investors manage retirement planning and occasional borrowing within Client’s ecosystem, I used a mixed-methods approach:

Quantitative:

I reviewed analytics on portal usage, contribution patterns, and call center logs, where borrowing was among the top three inquiries.

Qualitative:

I conducted in-depth user interviews with investors, advisors, and service agents, along with usability and diary studies.

Contextual Inquiry:

I observed users as they navigated the retirement dashboard and borrowing flows to spot challenges in real tasks.

Key Research Insights:

Kyle Bennett

“I’m not sure if I’m saving enough each year.”

Users want a single readiness score that brings together contributions, market conditions, and life events.

“I want to try things out safely.”

Investors want scenario simulators to test changes in contributions, retirement ages, or borrowing options without worrying about making mistakes.

Logan Pierce

Emily Foster

“Borrowing makes me nervous because I can’t see how it will affect me in the long run.”

Investors want to model how loans will impact future balances, tax savings, and RMDs before they commit.

“It’s tough to find the right information in one place.”

Participants said they have to switch between several tabs to check balances, loan status, and contribution history.

Kyle Bennett

Logan Pierce

“I don’t trust general recommendations.”

Users expect AI-driven suggestions to be clear, showing which inputs lead to the advice.

“Advisors and agents don’t see what I see.”

Service staff do not have the same context, which makes customers repeat their stories during escalations.

Megan Riley

Zachary Wells

“The system doesn’t adjust to my milestones.”

Users want proactive reminders, like when they are close to contribution limits, facing RMD deadlines, or after borrowing.

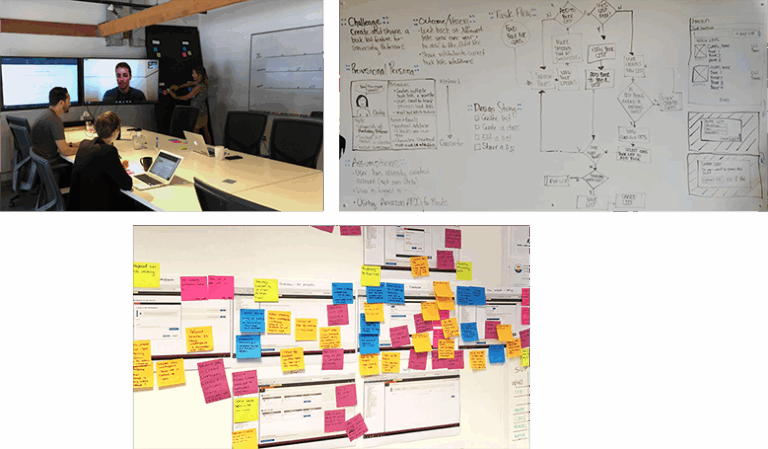

Designing for Clarity and Confidence

I led design workshops to reimagine how predictive tools could help users feel in control.

I created low-fidelity prototypes of:

Retirement Readiness Score dashboards that visualized preparedness in simple terms.

Interactive scenario simulators where users could adjust inputs like retirement age and monthly contributions and see instant recalculations.

Personalized recommendation modules suggesting small changes with big impact.

Predictive alerts that proactively warned about shortfalls or tax implications.
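To make the simulator idea concrete, here is a minimal sketch of the kind of recalculation a slider change could trigger. It is not the production forecasting model: the `Scenario` fields, the constant annual return, and the monthly compounding are all simplifying assumptions chosen for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scenario:
    """Hypothetical simulator inputs; field names are illustrative only."""
    current_age: int
    retirement_age: int
    current_balance: float
    monthly_contribution: float
    annual_return: float  # assumed constant, which real markets are not


def project_balance(s: Scenario) -> float:
    """Project the balance at retirement with monthly compounding.

    Each month the balance grows by one twelfth of the annual return,
    then the monthly contribution is added.
    """
    months = max(0, (s.retirement_age - s.current_age) * 12)
    monthly_rate = s.annual_return / 12
    balance = s.current_balance
    for _ in range(months):
        balance = balance * (1 + monthly_rate) + s.monthly_contribution
    return balance


# A "what if" comparison: retire three years earlier and contribute 5% more.
baseline = Scenario(
    current_age=40,
    retirement_age=67,
    current_balance=100_000.0,
    monthly_contribution=500.0,
    annual_return=0.05,
)
what_if = replace(baseline, retirement_age=64, monthly_contribution=525.0)
delta = project_balance(what_if) - project_balance(baseline)
```

Keeping the scenario immutable and deriving each "what if" with `replace` means the baseline is never overwritten, which matches the goal of letting users try changes safely and compare them side by side.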

From the beginning, I worked closely with our Legal and Compliance partners to ensure every recommendation:

Used language consistent with regulatory guidelines.

Included clear disclaimers.

Avoided promising outcomes or implying fiduciary advice.

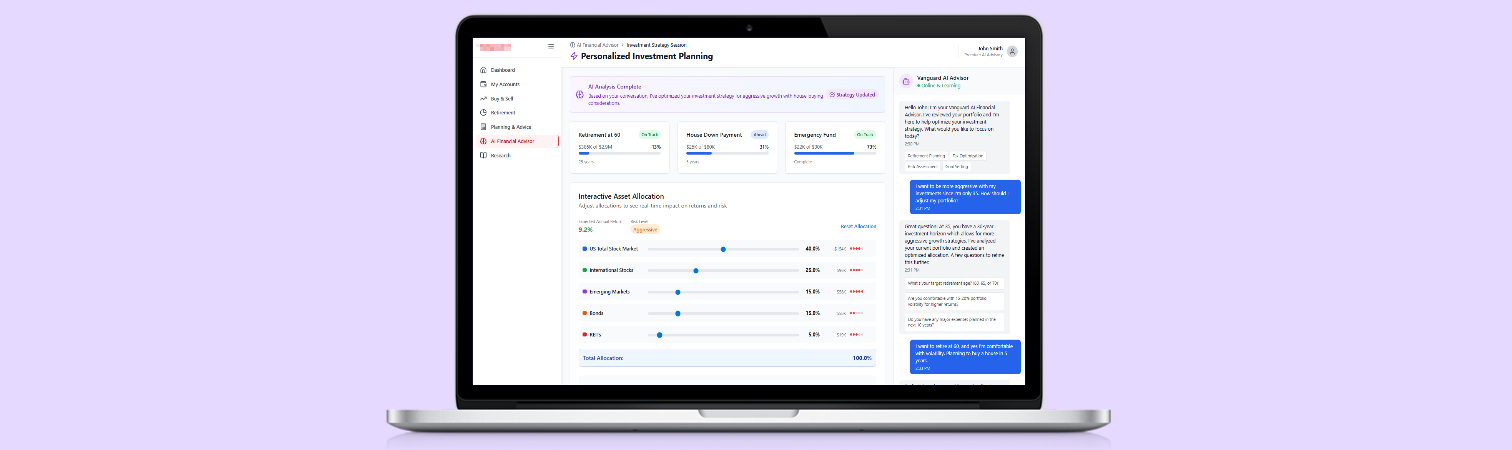

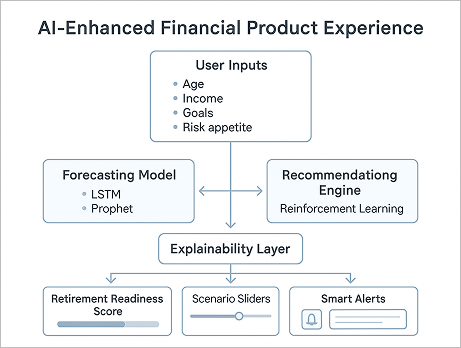

Bringing Designs and AI to Life

I partnered with data scientists and product managers to integrate:

Forecasting models projecting future retirement income under different scenarios.

A recommendation engine suggesting optimized contribution strategies.

Explainability overlays so users could understand why they were seeing certain suggestions.
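One way to picture an explainability overlay is as a recommendation that always carries the list of inputs it was derived from, so the UI can state them in plain language. The sketch below is an assumption about how that could be structured, not the data model we shipped; `Recommendation`, `inputs_used`, and `explain` are hypothetical names, and any real copy would still need Legal and Compliance approval.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Recommendation:
    """Hypothetical recommendation payload handed to the UI."""
    message: str
    inputs_used: Tuple[str, ...]  # plain-language names of the inputs
    ai_generated: bool = True


def explain(rec: Recommendation) -> str:
    """Build the overlay text that tells users what a suggestion is based on."""
    names = list(rec.inputs_used)
    if not names:
        # A suggestion with no stated basis should not reach the user.
        raise ValueError("a recommendation must list the inputs it used")
    if len(names) == 1:
        basis = f"Based on your {names[0]}."
    elif len(names) == 2:
        basis = f"Based on your {names[0]} and {names[1]}."
    else:
        basis = "Based on your " + ", ".join(names[:-1]) + f", and {names[-1]}."
    label = "AI-generated suggestion. " if rec.ai_generated else ""
    return label + basis


rec = Recommendation(
    message="Consider a small increase to your monthly contribution.",
    inputs_used=("current savings rate", "target retirement age", "market assumptions"),
)
overlay_text = explain(rec)
```

Refusing to render a recommendation that has no listed inputs is a deliberate choice in this sketch: it keeps the "no black box" rule enforceable in code rather than leaving it to review.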

Key design principles:

Show projections visually, not as tables of numbers.

Clearly indicate where AI is at play in the UI.

Allow users to explore “what if” scenarios with sliders and toggles.

State what each projection is based on, for example: “Based on your current savings rate, target retirement age, and market assumptions.”

Always give users options to adjust, save, or ignore recommendations.

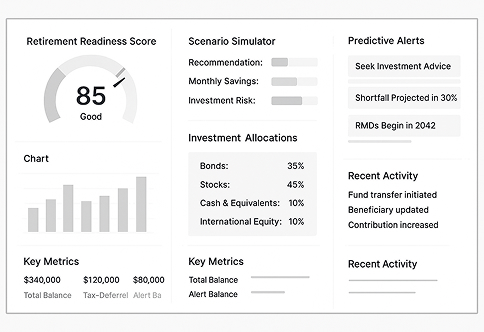

Low-Fidelity - Investor Dashboard

High-Fidelity - Investor Dashboard (Concept)

High-Fidelity - AI Driven Investment Planning (Concept)

The Moment It Clicked

Midway through testing, a participant used the scenario simulator to see the impact of retiring three years earlier and increasing contributions by 5%.

She paused and said:

Emma Randall

“I’ve never been able to see this so clearly. This makes me feel like I have a plan.”

That was the moment I knew we were solving the right problem.

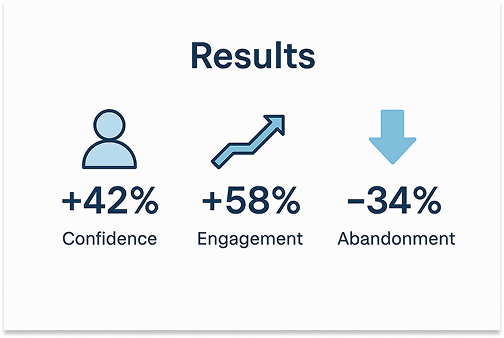

Impact

After three rounds of testing and iteration, our new experience achieved:

42% increase in users’ confidence in planning for retirement.

58% more engagement with recommendations.

34% reduction in users abandoning projections mid-task.

38% increase in self-servicing, which resulted in $250,000 in customer service cost savings.

More importantly, we saw a shift in how people felt: less anxious, more empowered.

What I Learned

This project taught me:

People don’t trust black-box algorithms or generic solutions without a human in the loop.

Interactivity builds confidence. When users can explore scenarios themselves, they feel ownership over their plan.

Regulatory clarity shapes good design. Partnering with Compliance from day one not only kept us safe but improved credibility.

Always bring in an agent (a human in the loop) if a user cannot find the information within one or two attempts.

Small moments of clarity when employing AI can change how people feel about their interaction with planning and servicing.

Reflection

Looking back, I’m proud we didn’t settle for generic charts.

We designed predictive tools that made people feel supported in one of the most important decisions of their lives.

This work also deepened my experience balancing AI innovation with the strict regulatory environment of financial services.

My Role

Led end-to-end research, ideation, and prototyping.

Facilitated workshops to define the AI product vision, product prioritizations, VMPs and Agile setup.

Partnered with Data Science and Engineering to align model capabilities with user needs.

Collaborated with Compliance and Legal to ensure regulatory compliance.

Delivered high-fidelity prototypes and detailed design specifications.

Helped design leadership define servicing standards across web, mobile, voice, and chat, including content strategies, bot and servicing personas, and the overall conversational design practice.

Mentored senior and junior designers, trained them in servicing design for web and conversational interfaces, and gave constructive feedback with hands-on examples.

Closing Thought

In a world where AI is everywhere, I believe the most powerful things we can design into servicing experiences are care, understanding, and trust.